Methods for state estimation that rely on visual information are challenging on dynamic

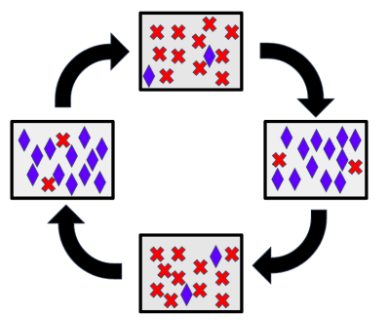

robots because of rapid changes in the viewing angle of onboard cameras and other sensors. Conventional techniques that perform state estimation over the full cycle of a gait suffer from poor performance, likely because information (ie landmarks) are not being considered in the proper sequence, e.g., landmarks that are visible when the robot is at the “top” of its gait cycle may not correspond to landmarks that are at the “bottom” of the gait cycle, causing estimators to diverge or produce poor estimates.

Our work leverages structure in the way that dynamic robots locomote to make state estimation more tractable despite these challenges. In particular, our method exploits the periodic, predictable motion that legged robots often exhibit to improve the performance of the front end (feature tracking) of a visual-inertial SLAM system. Inspired by previous work on coordinated mapping with multiple robots, our method performs multi-session SLAM on a single robot, where each session is responsible for mapping a distinct portion of the robot’s gait cycle. Our method outperforms several state-of-the-art visual and visual-inertial SLAM methods both in simulation and on data collected from a real-world quadrupedal robot.
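To make the idea of one session per gait phase concrete, here is a minimal sketch that assigns each camera frame to a session by binning its gait phase into equal-width intervals. It assumes a known, constant gait period and computes the phase from timestamps alone; a real system might instead derive the phase from IMU pitch or foot-contact events. The names `gait_phase`, `session_for_frame`, and `split_into_sessions` are hypothetical and do not come from the authors' implementation.

```python
def gait_phase(t: float, period: float, t0: float = 0.0) -> float:
    """Gait phase in [0, 1) for timestamp t.

    Assumption (hypothetical): the gait is strictly periodic with a known
    period and starts at t0.
    """
    return ((t - t0) / period) % 1.0


def session_for_frame(t: float, period: float, num_sessions: int, t0: float = 0.0) -> int:
    """Map a frame timestamp to one of num_sessions equal-width phase bins."""
    phase = gait_phase(t, period, t0)
    # Guard against floating-point rounding pushing phase * n up to n.
    return min(int(phase * num_sessions), num_sessions - 1)


def split_into_sessions(timestamps, period, num_sessions, t0=0.0):
    """Group frame timestamps by the session that should track them."""
    sessions = {k: [] for k in range(num_sessions)}
    for t in timestamps:
        sessions[session_for_frame(t, period, num_sessions, t0)].append(t)
    return sessions


if __name__ == "__main__":
    # Hypothetical 0.5 s gait period split into three sessions.
    print(split_into_sessions([0.0, 0.2, 0.4, 0.5], period=0.5, num_sessions=3))
```

Because frames one full period apart fall into the same bin, each session only ever matches features against views taken from a similar point in the gait cycle.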

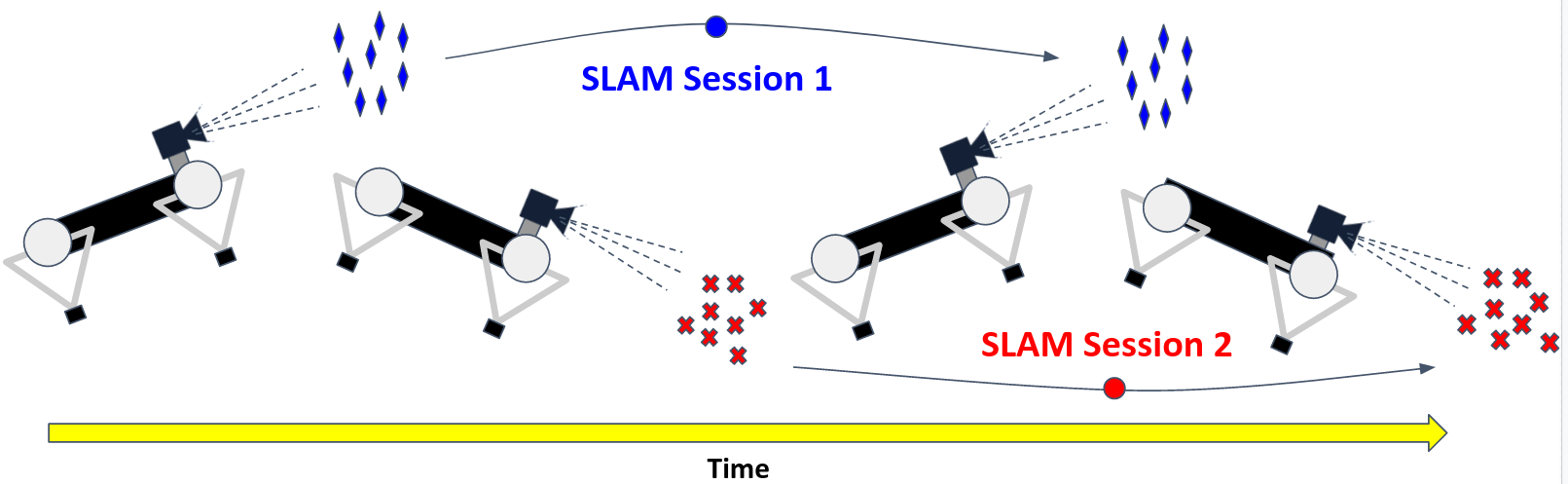

The above figure shows an example of how a legged robot that is periodically

pitching could maintain two separate SLAM sessions: one for when it is looking

upwards and one for when it is looking downwards. Our method constrains each

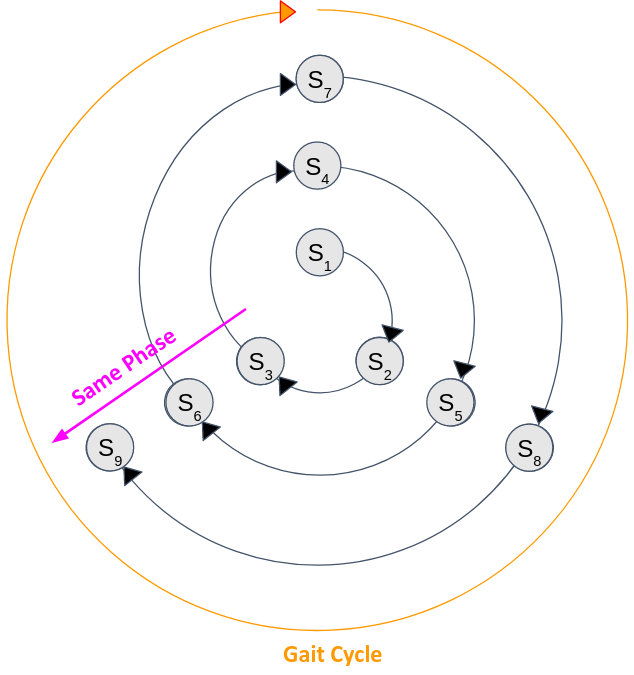

SLAM session running on the robot with relative pose constraints from inertial measurements from an IMU attached to the robot. By tightly coupling each individual SLAM session to one another in a factor graph based optimization framework, our method is able to produce a unified SLAM estimate that achieves greater combined performance than any single SLAM session. Converting the above figure to a cyclic representation, but with three SLAM sessions gives rise to the below figure:

We then construct a factor graph over these sessions and use standard factor graph machinery to run the separate SLAM processes efficiently.
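As a toy illustration of how inertial factors can couple the sessions, the following sketch solves a one-dimensional, linear-Gaussian factor graph with NumPy least squares. Keyframe `k` belongs to session `k mod S`, mirroring the cyclic arrangement of sessions. Hypothetical visual factors link consecutive keyframes within the same session, IMU relative factors link each keyframe to the next one in time (which lies in a different session), and a prior on the first keyframe fixes the gauge. The function name, noise values, and 1D state are assumptions for illustration only; a real visual-inertial system would optimize full 6-DoF poses with a nonlinear solver.

```python
import numpy as np


def solve_cyclic_pose_graph(imu_deltas, vis_deltas, num_sessions,
                            sigma_imu=0.05, sigma_vis=0.02,
                            prior=0.0, sigma_prior=1e-3):
    """Toy 1D factor graph over keyframes split cyclically into sessions.

    imu_deltas[k]: measured x[k+1] - x[k]; links session k % S to (k+1) % S.
    vis_deltas[k]: measured x[k+S] - x[k]; stays inside session k % S.
                   Use None for a missing visual constraint (e.g., lost track).
    All names and noise values are hypothetical.
    """
    n = len(imu_deltas) + 1
    if len(vis_deltas) > n - num_sessions:
        raise ValueError("too many visual deltas for the number of keyframes")

    rows, rhs = [], []

    # Prior factor on the first keyframe removes the global translation freedom.
    r = np.zeros(n)
    r[0] = 1.0
    rows.append(r / sigma_prior)
    rhs.append(prior / sigma_prior)

    # Inertial relative factors between temporally consecutive keyframes.
    for k, d in enumerate(imu_deltas):
        r = np.zeros(n)
        r[k], r[k + 1] = -1.0, 1.0
        rows.append(r / sigma_imu)
        rhs.append(d / sigma_imu)

    # Visual factors between consecutive keyframes of the same session.
    for k, d in enumerate(vis_deltas):
        if d is None:
            continue
        r = np.zeros(n)
        r[k], r[k + num_sessions] = -1.0, 1.0
        rows.append(r / sigma_vis)
        rhs.append(d / sigma_vis)

    # Whitened rows turn the MAP problem into ordinary least squares.
    A = np.vstack(rows)
    b = np.array(rhs)
    x, *_ = np.linalg.lstsq(A, b, rcond=None)
    return x


if __name__ == "__main__":
    # Seven keyframes, three sessions; IMU deltas are biased (0.12 vs. 0.1).
    x = solve_cyclic_pose_graph([0.12] * 6, [0.3] * 4, num_sessions=3)
    print(np.round(x, 3))
```

In the usage example, integrating the biased IMU deltas alone would place the last keyframe at 0.72 instead of 0.6; the within-session visual factors, which are given tighter noise here, pull the joint estimate back toward the true trajectory. That is the intuition behind coupling sessions rather than trusting any single one.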