Automated Reverse Engineering of Buildings

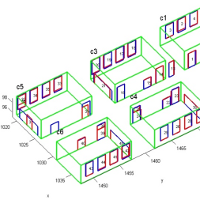

Laser scanners have proven effective for measuring the 3D shape of facilities such as buildings and process plants. Scan results can be used to model the as-built or as-is conditions of a facility, which may differ substantially from the original design plans. However, laser scanners produce data as a set of points (known as a point cloud), whereas people using the data need higher-level information, such as the identity, location, and shape of walls, doors, and windows. In practice, this high-level information is usually represented as a building information model (BIM). Our research explores methods to automatically create BIMs from point clouds and to address specific challenges in representing such “as-built” BIMs.

Our modeling work uses techniques from computer vision and machine learning to automatically segment, model, and recognize the core components of a building’s envelope, including walls, floors, ceilings, doors, and windows.

Our representation work addresses problems specific to as-built BIMs. One such problem is the representation of occluded regions. When a room is scanned, some parts of the walls are typically blocked by furniture or other obstructions. When the as-built BIM is created, the modeled walls are extended to fill in these occluded regions. However, the representation makes no distinction between regions that were filled in and regions that were measured directly. A downstream stakeholder may then make poor decisions based on incorrect assumptions about the accuracy of the data in these originally occluded regions.
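
To make the occlusion problem concrete, the following is a minimal sketch of how a wall surface could carry a provenance label per cell, so that filled-in regions stay distinguishable from directly measured ones. It is an illustration only, not the project's actual representation: the names `CellLabel`, `label_wall_cell`, `label_wall`, and `measured_fraction`, the per-cell range inputs, and the 5 cm tolerance are all assumptions made for this example.

```python
from enum import Enum


class CellLabel(Enum):
    """Hypothetical provenance labels for one cell of a modeled wall."""
    MEASURED = "measured"   # the scanner hit the wall surface here
    OCCLUDED = "occluded"   # something in front of the wall blocked the ray
    OPENING = "opening"     # the ray passed through the wall plane
    UNKNOWN = "unknown"     # no return was recorded for this cell


def label_wall_cell(measured_range, expected_range, tol=0.05):
    """Label one cell by comparing the measured range (metres) along a
    scanner ray with the range at which that ray meets the wall plane.
    The tolerance value is an assumption, not a recommended setting."""
    if measured_range is None:
        return CellLabel.UNKNOWN
    if abs(measured_range - expected_range) <= tol:
        return CellLabel.MEASURED
    if measured_range < expected_range:
        return CellLabel.OCCLUDED
    return CellLabel.OPENING


def label_wall(measured, expected, tol=0.05):
    """Label every cell of a wall given parallel lists of ranges."""
    return [label_wall_cell(m, e, tol) for m, e in zip(measured, expected)]


def measured_fraction(labels):
    """Fraction of cells that were observed directly."""
    if not labels:
        return 0.0
    return sum(1 for lab in labels if lab is CellLabel.MEASURED) / len(labels)


if __name__ == "__main__":
    labels = label_wall([3.0, 1.2, 4.5, None], [3.0, 3.0, 3.0, 3.0])
    print([lab.value for lab in labels])
    print(measured_fraction(labels))
```

Keeping such labels alongside the geometry would let a downstream user see which parts of a wall were inferred rather than measured, which is the distinction the current representation lacks.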

Currently, we are investigating several different aspects of the problem of automatic reverse engineering of buildings:

- Context-based recognition of building components – How can we use context to recognize interior structures, such as walls, ceilings, floors, doors, doorways, and windows, in unmodified environments?

- Detailed wall modeling in highly cluttered environments – How can we model wall surfaces accurately despite occlusions and clutter caused by furniture, fixtures, and other irrelevant objects?

- Transforming surface representations to volumetric ones – How can we convert the surface representations that are naturally derived from sensed data into volumetric representations needed by CAD and BIM software?

- Floor plan modeling – How can we estimate 2D floor plans from sensed 3D data, and how should we evaluate the accuracy of automated floor plan modeling algorithms?

- Representation of as-built BIMs – How can the imperfections of sensed 3D data be represented within the BIM framework, which was originally designed to handle only perfect data from CAD systems?
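
As a rough illustration of the floor plan modeling question, one common starting point is to project the 3D points onto the ground plane and count points per 2D cell: vertical surfaces such as walls stack many points into the same cell, while floors and ceilings contribute few. The sketch below assumes a z-up coordinate system; the function name `wall_candidate_cells`, the cell size, and the count threshold are hypothetical choices for this example and do not describe the project's algorithm.

```python
import math
from collections import Counter


def wall_candidate_cells(points, cell_size=0.1, min_count=3):
    """Return the 2D grid cells that receive at least `min_count` points
    when (x, y, z) points are projected onto the ground plane.

    Assumes z is the vertical axis; cell_size is in the same units as
    the point coordinates."""
    counts = Counter(
        (math.floor(x / cell_size), math.floor(y / cell_size))
        for x, y, _z in points
    )
    return {cell for cell, n in counts.items() if n >= min_count}


if __name__ == "__main__":
    # Five points stacked vertically (a wall) plus two isolated floor points.
    wall = [(0.05, 0.05, z) for z in (0.0, 0.5, 1.0, 1.5, 2.0)]
    floor = [(1.05, 0.05, 0.0), (2.05, 0.05, 0.0)]
    print(wall_candidate_cells(wall + floor))
```

Line or segment fitting would still be needed to turn the candidate cells into an actual floor plan, and clutter such as tall furniture can produce false candidates.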

This project is funded in part by the National Science Foundation (CMMI-0856558) and by the Pennsylvania Infrastructure Technology Alliance.

past head

- Burcu Akinci

past staff

- Engin Anil

- Emiliano Perez Hernandez

- Antonio Adan Oliver

- Enrique Valero Rodriguez

- Siddharth Soundararajan